- Recommendations

- Cybersecurity Is Becoming an Operational Systems Problem

- AI-Driven Phishing Is Changing the Economics of Cyberattacks

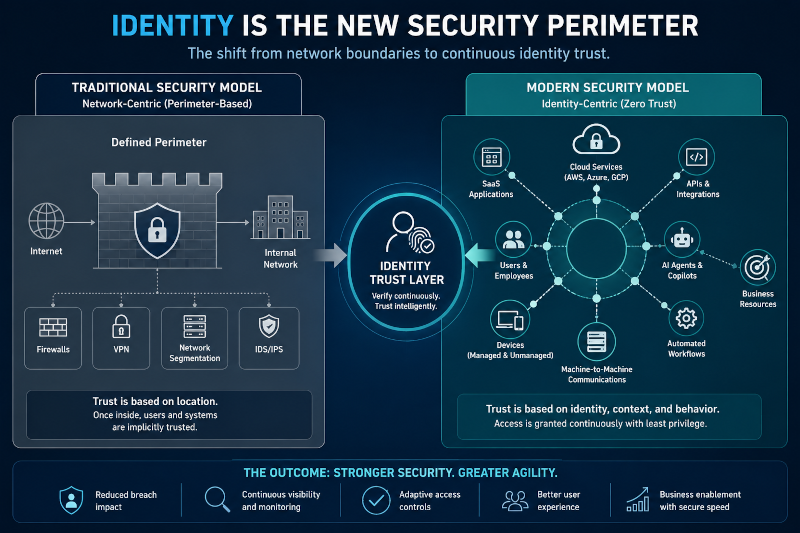

- Identity Security Is Replacing Perimeter Security

- AI Systems Are Becoming Part of the Attack Surface

- Operational Resilience Is Becoming More Important Than Prevention Alone

- Cybersecurity Is Becoming a Real-Time Governance Challenge

Recommendations

- Treat identity systems as operational infrastructure rather than isolated authentication tools.

- Deploy phishing-resistant authentication for privileged accounts and high-risk workflows first.

- Monitor session activity continuously instead of relying solely on login-based security controls.

- Integrate AI governance into cybersecurity programs before AI deployment scales broadly across departments.

- Reduce operational complexity by consolidating fragmented SaaS access, identity systems, and vendor permissions.

- Build incident response plans around operational continuity, not just technical containment.

Cybersecurity Is Becoming an Operational Systems Problem

Enterprise cybersecurity is no longer defined primarily by firewalls, endpoint software, or perimeter defense models. In modern cloud and hybrid environments, identity increasingly functions as the core security boundary, while operational coordination determines how effectively organizations respond when those boundaries fail.

This shift is changing the nature of enterprise risk itself.

Modern organizations now operate across interconnected ecosystems of SaaS platforms, APIs, contractors, cloud services, AI copilots, third-party integrations, and machine-to-machine workflows that evolve faster than traditional governance structures can adapt. Security teams are no longer simply protecting networks. They are protecting operational trust across distributed digital systems.

The challenge becomes even more complex as AI accelerates both attack sophistication and organizational dependency on automated workflows. Generative AI systems now assist attackers with phishing, impersonation, reconnaissance, malware adaptation, and social engineering at unprecedented scale.

The Microsoft Digital Defense Report 2024 noted that identity compromise, cloud credential attacks, AI-enabled targeting, and post-authentication attacks are becoming increasingly central to the modern threat landscape. Microsoft reported observing more than 600 million identity attacks per day and warned that attackers increasingly target sessions, tokens, and authenticated workflows rather than passwords alone.

This transformation mirrors a broader operational shift explored previously in Cybersecurity Is No Longer Just a Technical Problem, where cybersecurity increasingly overlapped with governance, workflow coordination, and organizational design rather than isolated technical infrastructure.

The organizations struggling most with cybersecurity today are often not the ones lacking technology. Increasingly, they are the organizations operating inside fragmented operational environments where visibility, accountability, and coordination break down faster than security systems can adapt.

Recommendation: Treat identity systems, operational workflows, and SaaS access governance as part of core enterprise infrastructure rather than isolated IT functions.

AI-Driven Phishing Is Changing the Economics of Cyberattacks

One of the most important cybersecurity shifts underway is the industrialization of phishing through generative AI.

Attackers no longer need to manually craft convincing phishing campaigns or rely on generic templates filled with spelling mistakes and obvious formatting issues. AI systems can now generate highly personalized messages that imitate writing styles, business terminology, internal workflows, and organizational context with alarming accuracy.

The result is not simply more phishing. It is phishing operating at machine scale.

Research published in 2024 examining AI-enabled spear phishing found that fully automated AI phishing campaigns performed comparably to human-crafted phishing attacks while dramatically reducing the time and cost required to execute large-scale targeting operations.

This changes the operational dynamics of enterprise defense significantly.

Traditional awareness training increasingly struggles because modern phishing attacks often resemble legitimate internal communication rather than suspicious external messages. AI-generated phishing can adapt tone, urgency, organizational language, and behavioral patterns dynamically across targets.

The 2023 MGM Resorts and Caesars Entertainment breaches demonstrated how dangerous this environment has become. Attackers associated with Scattered Spider reportedly used social engineering techniques against IT support personnel to gain access to internal systems, ultimately contributing to operational disruption and major financial losses. MGM later disclosed that the incident resulted in roughly $100 million in financial impact.

The incident exposed a broader reality affecting many enterprises: modern cyberattacks increasingly target human coordination systems rather than technical vulnerabilities alone.

The Microsoft report similarly noted that post-authentication attacks, MFA fatigue campaigns, adversary-in-the-middle techniques, and identity-focused social engineering continue to grow as attackers exploit predictable human behaviors inside enterprise workflows.

Organizations are therefore confronting a larger strategic challenge: human awareness alone is no longer sufficient as a primary defensive layer when AI systems allow attackers to scale highly personalized deception continuously.

Recommendation: Prioritize phishing-resistant authentication and identity verification workflows for help desks, administrators, and high-risk operational roles first.

Identity Security Is Replacing Perimeter Security

For decades, enterprise cybersecurity depended heavily on the assumption that networks, devices, and internal infrastructure could be segmented and protected through perimeter controls.

That model increasingly fails inside cloud-native operational environments.

Modern organizations now operate across:

- SaaS ecosystems,

- remote work environments,

- APIs,

- contractors,

- AI copilots,

- mobile devices,

- and machine-driven workflows that continuously cross traditional network boundaries.

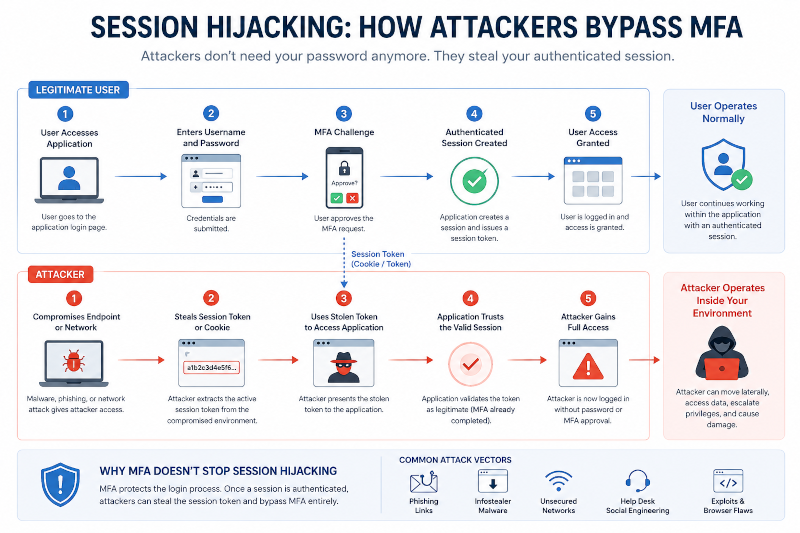

As a result, attackers increasingly target authenticated sessions instead of passwords themselves.

Infostealer malware now captures browser sessions and authentication tokens directly from endpoints. Adversary-in-the-middle frameworks intercept authentication flows in real time. MFA fatigue attacks exploit user behavior through repeated approval requests until employees eventually authorize malicious access.

Once attackers obtain authenticated session access, traditional MFA controls often become ineffective because authentication has technically already occurred.
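The MFA fatigue pattern described above can be illustrated with a small sliding-window counter. This is a minimal sketch, not a production detector: the class name `PushFatigueDetector`, the threshold of five prompts, and the ten-minute window are all assumptions chosen for illustration, and a real deployment would read prompt events from the identity provider's logs.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class PushFatigueDetector:
    """Hypothetical helper: flags users who receive many MFA push prompts quickly."""

    max_prompts: int = 5            # assumed threshold; tune per environment
    window_seconds: float = 600.0   # assumed 10-minute sliding window
    _prompts: dict = field(default_factory=dict)

    def record_prompt(self, user: str, timestamp: float) -> bool:
        """Record one push prompt; return True if the count exceeds the threshold."""
        window = self._prompts.setdefault(user, deque())
        window.append(timestamp)
        # Drop prompts that have fallen out of the sliding window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_prompts


detector = PushFatigueDetector(max_prompts=3, window_seconds=60)
flags = [detector.record_prompt("alice", t) for t in (0, 10, 20, 30)]
```

In this usage, the fourth prompt inside one minute trips the flag. A flagged user could then be routed to a stronger verification step instead of receiving further push prompts.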

Microsoft’s Digital Defense Report noted that while password attacks still dominate identity compromise activity, post-authentication attacks involving token theft, consent phishing, and session hijacking are becoming increasingly operationally significant.

This is one reason many enterprises are now shifting toward:

- continuous authentication,

- behavioral analytics,

- session monitoring,

- device trust validation,

- and phishing-resistant authentication standards such as FIDO2 security keys.

The broader shift is strategic rather than purely technical.

Cybersecurity increasingly becomes the continuous validation of operational trust rather than a one-time authentication event.

This overlaps closely with themes explored previously in The Future of Operational Intelligence in AI-Driven Organizations, where operational systems increasingly depended on real-time visibility, adaptive monitoring, and continuous coordination rather than static control environments.

Recommendation: Monitor authenticated sessions continuously and reduce reliance on one-time login verification as the primary security boundary.
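One way to picture continuous session validation is to compare each in-session request against the context captured at login. The sketch below is an assumption-laden illustration: the `SessionContext` fields, the weights, and the `step_up` and `revoke` thresholds are hypothetical and would need tuning against real traffic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SessionContext:
    """Hypothetical snapshot of the signals observed for a session."""

    ip_prefix: str    # e.g. the first three octets of the client IP
    device_id: str    # identifier issued by a device trust service
    user_agent: str


def session_risk(baseline: SessionContext, current: SessionContext) -> int:
    """Return an additive risk score; the weights are illustrative assumptions."""
    score = 0
    if current.device_id != baseline.device_id:
        score += 3  # a token replayed from another device is the strongest signal
    if current.ip_prefix != baseline.ip_prefix:
        score += 2
    if current.user_agent != baseline.user_agent:
        score += 1
    return score


def decide(score: int, step_up_at: int = 2, revoke_at: int = 4) -> str:
    """Map a risk score to an action: allow, re-authenticate, or end the session."""
    if score >= revoke_at:
        return "revoke"
    if score >= step_up_at:
        return "step_up"
    return "allow"


login = SessionContext("203.0.113", "laptop-1", "BrowserA")
moved = SessionContext("198.51.100", "laptop-1", "BrowserA")
replayed = SessionContext("198.51.100", "unknown", "BrowserA")
```

Here `decide(session_risk(login, moved))` asks for re-authentication, while the replayed token on an unknown device is revoked outright. The point of the design is that the check runs on every request, not only at login.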

AI Systems Are Becoming Part of the Attack Surface

As enterprises deploy AI copilots, automation platforms, retrieval systems, and machine-learning workflows more broadly, AI infrastructure itself increasingly becomes part of the operational attack surface.

This introduces entirely new cybersecurity risks.

AI systems now influence:

- fraud detection,

- workflow routing,

- customer support,

- anomaly monitoring,

- document analysis,

- operational prioritization,

- and enterprise decision support environments.

The more operationally embedded these systems become, the more attackers gain incentive to manipulate them.

Two major attack patterns are becoming increasingly important.

The first involves data poisoning, where attackers intentionally manipulate training data or input streams to gradually influence AI behavior over time. The second involves evasion attacks, where subtle modifications to inputs cause AI systems to misclassify malicious activity as legitimate.
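A simple guard against crude poisoning of an input stream is to compare recent values of a feature with a trusted baseline. The sketch below is only an illustration under stated assumptions: the function name `drift_z_score` is hypothetical, and the z-score test is a stand-in technique that will miss slow, carefully paced poisoning and most evasion attacks.

```python
import statistics


def drift_z_score(baseline: list[float], recent: list[float]) -> float:
    """Return how many standard errors the recent mean sits from the baseline mean.

    Assumes `baseline` holds at least two trusted observations of one feature.
    """
    mu = statistics.fmean(baseline)
    sigma = statistics.stdev(baseline)
    recent_mean = statistics.fmean(recent)
    if sigma == 0:
        # A constant baseline: any deviation at all counts as unbounded drift.
        return 0.0 if recent_mean == mu else float("inf")
    standard_error = sigma / len(recent) ** 0.5
    return abs(recent_mean - mu) / standard_error


trusted = [10, 12, 11, 9, 10, 11, 10, 12]
normal_batch = [10, 11, 10, 12]
shifted_batch = [15, 16, 15, 17]
```

With an assumed alert threshold of 3, `normal_batch` passes and `shifted_batch` is held for review before it reaches a training or retrieval pipeline.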

Research from the Stanford AI Index Report 2024 emphasized that AI systems are rapidly expanding into operational and enterprise environments faster than governance, accountability, and security frameworks are evolving around them.

This creates a growing operational dependency problem.

Organizations increasingly rely on AI-generated outputs while often lacking:

- visibility into model behavior,

- governance standards,

- retrieval integrity,

- auditability,

- and verification workflows capable of validating machine-assisted decisions consistently.

The risk is not simply incorrect AI output. It is operational dependence on systems whose reliability may degrade invisibly over time.


The broader coordination risks are highlighted in Why Organizational Knowledge Disappears Faster Than Companies Realize, where fragmented knowledge systems quietly weakened operational continuity and decision quality long before failures became visible across the organization.

The organizations deploying AI most effectively may not necessarily be the ones automating the largest number of workflows. Increasingly, they may be the organizations building the strongest governance structures around AI-assisted operational environments.

Recommendation: Secure AI data pipelines, retrieval systems, and operational inputs with the same rigor applied to production infrastructure and privileged systems.

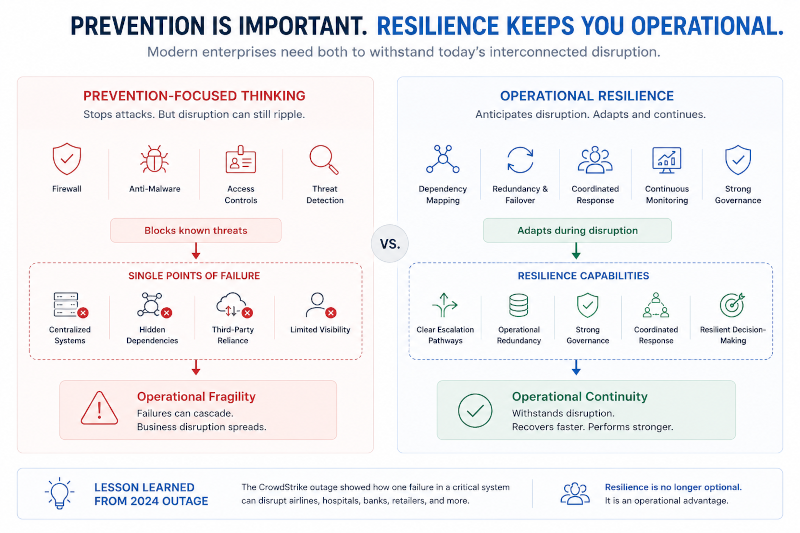

Operational Resilience Is Becoming More Important Than Prevention Alone

Many enterprises still approach cybersecurity primarily through prevention-focused thinking: blocking intrusions, stopping malware, and preventing compromise. Those objectives remain important.

But modern operational environments increasingly require resilience alongside prevention.

The 2024 CrowdStrike outage demonstrated how deeply interconnected digital ecosystems can create cascading operational disruption even when incidents do not originate from malicious attacks. A faulty software update ultimately disrupted airlines, hospitals, banks, emergency services, retailers, and enterprise operations globally.

The incident exposed a broader structural reality: many organizations now depend heavily on centralized digital infrastructure without fully understanding systemic dependency risk across operational environments.

The Cloud Security Alliance later noted that the outage revealed weaknesses in:

- change management,

- incident response planning,

- dependency mapping,

- and operational continuity coordination across interconnected enterprise systems.

This shift matters because cybersecurity failures increasingly create operational consequences extending far beyond breached infrastructure itself.

Modern enterprises depend on interconnected identity systems, cloud providers, endpoint platforms, AI services, APIs, third-party vendors, and shared operational ecosystems that can amplify disruption rapidly when failures cascade across environments.

Cyber resilience therefore increasingly becomes an operational coordination capability.

The organizations that recover fastest are often not simply the organizations with the strongest technical controls. Increasingly, they are the organizations with:

- clear escalation pathways,

- operational redundancy,

- strong governance,

- coordinated response structures,

- and resilient decision-making systems under pressure.

Recommendation: Build incident response plans around operational continuity and dependency mapping rather than focusing exclusively on technical containment procedures.
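Dependency mapping can start as a plain graph of which service relies on which provider. The sketch below is a minimal illustration: the function name `blast_radius` and the service names are hypothetical, and the input map is assumed to be maintained by hand or exported from an inventory tool.

```python
from collections import deque


def blast_radius(depends_on: dict[str, set[str]], failed: str) -> set[str]:
    """Return every service that directly or transitively depends on `failed`."""
    # Invert the map: provider -> services that rely on it.
    dependents: dict[str, set[str]] = {}
    for service, providers in depends_on.items():
        for provider in providers:
            dependents.setdefault(provider, set()).add(service)

    impacted: set[str] = set()
    queue = deque([failed])
    while queue:
        node = queue.popleft()
        for service in dependents.get(node, ()):
            # Skip services already counted, and the failed node itself in a cycle.
            if service not in impacted and service != failed:
                impacted.add(service)
                queue.append(service)
    return impacted


# Hypothetical inventory: each service maps to the providers it relies on.
inventory = {
    "checkin": {"endpoint_agent", "sso"},
    "payments": {"sso"},
    "sso": {"cloud_idp"},
    "reporting": {"payments"},
}
```

Calling `blast_radius(inventory, "cloud_idp")` returns all four services, which shows how one upstream failure can reach workflows that never reference the provider directly. An incident plan can use this output to decide which teams to notify first.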

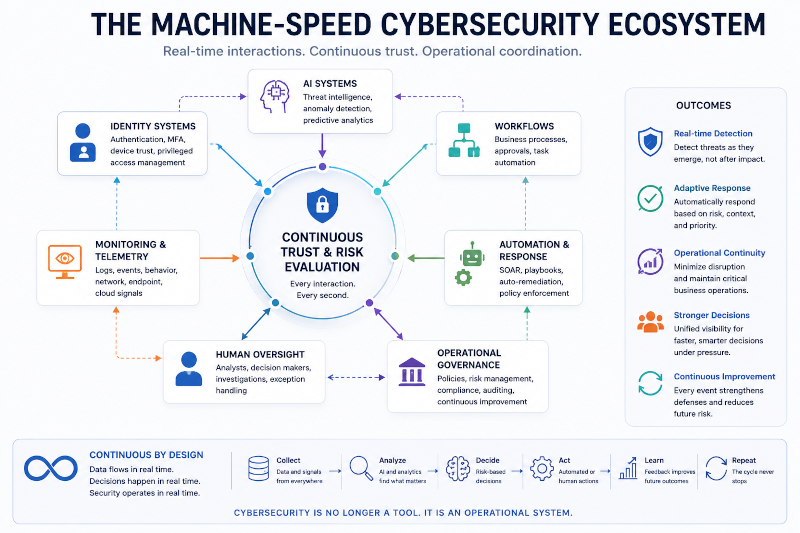

Cybersecurity Is Becoming a Real-Time Governance Challenge

The long-term cybersecurity challenge is no longer simply preventing intrusion. It is governing identity, automation, AI systems, workflows, and operational trust continuously across increasingly dynamic enterprise environments.

Modern organizations increasingly operate inside machine-speed ecosystems where AI systems generate recommendations, workflows adapt automatically, third-party integrations exchange data continuously, and operational decisions occur across distributed digital infrastructure in real time. As these environments become more interconnected and automated, cybersecurity governance is evolving from a static compliance function into a continuous operational discipline focused on managing trust, visibility, coordination, and decision integrity across rapidly changing systems.

The strongest cybersecurity programs increasingly combine:

- identity security,

- operational visibility,

- AI governance,

- workflow coordination,

- incident response,

- and organizational resilience into unified operational systems rather than isolated security functions.

Organizations that continue treating cybersecurity primarily as perimeter defense may increasingly struggle as operational complexity expands.

Organizations that redesign governance structures around adaptive operational environments, however, may gain long-term advantages because security becomes embedded into coordination systems themselves rather than layered on top after deployment.

In the emerging enterprise environment, cybersecurity is no longer simply about protecting infrastructure. It is increasingly about governing how identity, data, automation, and operational decision systems interact continuously at machine speed.

Recommendation: Redesign cybersecurity governance around continuous operational trust, visibility, and coordination rather than static perimeter assumptions alone.